[This post is an excerpt from a blog by Epic Games. Read the full post here.]

The simulation industry is undergoing a major pivot, moving from building tools that train humans to building tools that train machines. This requires AI systems that operate, perceive, and interact directly with the real world. Enter Physical AI. By combining AI models with sensors, cameras, and actuators, these systems, such as robots, autonomous vehicles, and drones, understand, reason, and act in real time, adapting to unpredictable environments. Unreal Engine is becoming the go-to platform for robotics teams, not just as a simulator, but as a synthetic data factory and real-time autonomy platform powering the next generation of robotics systems.

Photorealism as a technical requirement

Training a robot to operate in the real world requires vast amounts of data: annotated images for perception, physics interactions for control policies, and edge-case scenarios for safety validation. Collecting this data in the physical world is slow, expensive, dangerous, and fundamentally limited: you can’t stage a thousand car crashes, simulate a hurricane inside a warehouse, or replay a surgical complication on demand. This is why simulation has become the backbone of modern Physical AI development.

With simulation non-negotiable, the real question is, “Which platform delivers the best perception performance at the lowest cost and fastest iteration speed?”

For robotics teams, Unreal Engine is increasingly the answer. That’s because in robotics simulation, photorealism is more than a nice-to-have: it is a technical requirement. The engine’s powerful graphical capabilities make it the best option for closing the “sim-to-real gap”: the gap between what a model sees in simulation and what it encounters on a physical robot. The logic is simple: the more your synthetic images look like real photographs, the less additional work your model needs to generalize from simulation to reality.

Unreal Engine 5 introduced three technologies that fundamentally changed the game on this front, not only delivering photorealism but doing so in real time.

Nanite virtualized micropolygon geometry renders extremely high-resolution assets in real time, making it ideal for CAD imports, photogrammetry scans, and dense industrial environments.

Lumen dynamic global illumination calculates virtually infinite light bounces and indirect reflections in real time, enabling physically accurate lighting that reacts to changes in direct lighting (such as a light turning on) or geometry (such as a door opening).

And hardware ray tracing, including NVIDIA’s RTX-accelerated branch, delivers physically accurate reflections, shadows, and material responses.

[...]

Synthetic data for fast and cost-effective training

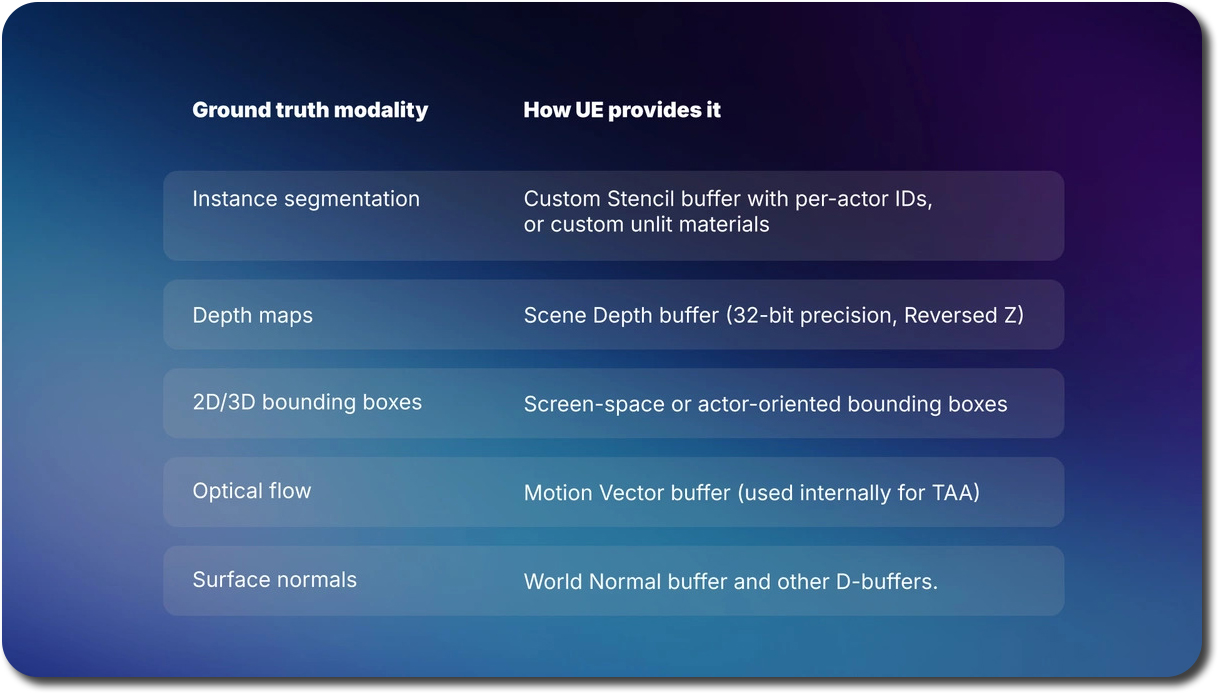

Because Unreal Engine generates every pixel mathematically, it already knows everything about the scene: where every object is, how far away it is, what material it has, and how it’s moving. So instead of requiring humans to label images after they’re captured, the engine can automatically output highly accurate labels at the same time it renders the image.
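To make the idea concrete, here is a minimal Python sketch of how labels can fall out of render-time data. It assumes the renderer exports an instance-ID mask alongside each image, with one integer per pixel and 0 meaning background. That layout and the function name `boxes_from_instance_mask` are illustrative assumptions, not part of any Unreal Engine API.

```python
from typing import Dict, List, Tuple

# (x_min, y_min, x_max, y_max) in inclusive pixel coordinates
BBox = Tuple[int, int, int, int]


def boxes_from_instance_mask(
    mask: List[List[int]], background: int = 0
) -> Dict[int, BBox]:
    """Derive one 2D bounding box per object from a per-pixel instance-ID mask.

    Hypothetical helper: `mask` stands in for whatever per-pixel ID buffer
    a renderer writes out next to the color image.
    """
    boxes: Dict[int, BBox] = {}
    for y, row in enumerate(mask):
        for x, instance_id in enumerate(row):
            if instance_id == background:
                continue  # background pixels carry no label
            if instance_id not in boxes:
                # First pixel seen for this object: start a 1x1 box
                boxes[instance_id] = (x, y, x, y)
            else:
                # Grow the existing box to include this pixel
                x0, y0, x1, y1 = boxes[instance_id]
                boxes[instance_id] = (min(x0, x), min(y0, y), max(x1, x), max(y1, y))
    return boxes


# Toy 5x4 mask with two objects (IDs 1 and 2); values are made up for illustration
example_mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 2],
    [0, 1, 1, 0, 2],
    [0, 0, 0, 0, 0],
]
example_boxes = boxes_from_instance_mask(example_mask)
```

Because the mask comes from the same scene data as the image, the boxes are pixel-exact by construction and no human annotator is involved.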

That has a number of advantages: no disagreements between annotators; no inconsistent labels in a dataset (for example, a forklift labeled as a truck); and no need for an expensive quality-control loop. What’s more, the economic case is compelling: training on synthetic data significantly reduces the cost per image at scale. For most perception pipelines, the economic crossover point where synthetic data becomes more cost-effective than manual annotation arrives far earlier than teams expect, often after only tens of thousands of images. With most robotics models needing millions of images, opting for synthetic data can result in huge cost savings.
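One way to reason about that crossover point is a simple break-even calculation. The sketch below assumes a deliberately simplified cost model: synthetic data has a one-time setup cost plus a small per-image cost, while manual annotation has only a per-image cost. The model, the function name, and every number in the example are placeholders for illustration, not figures from this post or from any vendor.

```python
import math
from typing import Optional


def crossover_images(
    setup_cost: float,
    synthetic_cost_per_image: float,
    manual_cost_per_image: float,
) -> Optional[int]:
    """Smallest image count at which synthetic data is no more expensive.

    Assumed model (a simplification):
        synthetic total = setup_cost + n * synthetic_cost_per_image
        manual total    = n * manual_cost_per_image
    Returns None when synthetic images are not cheaper per image,
    because the setup cost is then never recovered.
    """
    saving_per_image = manual_cost_per_image - synthetic_cost_per_image
    if saving_per_image <= 0:
        return None
    return math.ceil(setup_cost / saving_per_image)


# Placeholder inputs only: 30,000 setup, 0.25 per synthetic image,
# 1.00 per manually annotated image (arbitrary currency units).
break_even = crossover_images(30_000.0, 0.25, 1.0)
```

Under this model, every image beyond the break-even count saves the per-image difference, which is why the gap widens quickly for datasets in the millions.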

This story is reflected in the market signals we’re seeing: synthetic data is projected to become a dominant component of AI training pipelines.

Duality AI leverages these Unreal Engine capabilities for its Falcon digital twin platform, generating high-fidelity synthetic data and sensor simulations for enterprise customers across a variety of industries and use cases. Investing in these digital twins translates into measurable ROI through rapid training and deployment of AI models that safely fly drones, navigate reliably in the wilderness, and even dock orbiting satellites. In each of these scenarios, Falcon’s digital twin environments and virtual sensors deliver highly accurate synthetic data proven to close the sim-to-real gap. “That would not be possible without Unreal Engine’s advanced rendering capabilities, physics solvers, scalable scene management, and well-established dual use development community,” says Apurva Shah, CEO of Duality AI.
