Synthetic Aperture Radar (SAR) is becoming ubiquitous in modern infrastructure maintenance and in military and border surveillance. Its ability to image vast areas through cloud cover and darkness produces data that no optical sensor can replicate. This has created a need for better tools to interpret SAR data at scale, and for AI models built for that purpose, which in turn need significant amounts of synthetic data to ensure precise performance.

Last year, to meet this need, Duality introduced Falcon’s Virtual SAR Sensor: a physical simulation of how SAR operates, generating accurate imagery along with ground truth and automatic, pixel-perfect labeling. Since the original release, this SAR sensor has evolved to include a wider range of operational profiles across terrestrial and marine scenarios, with additional control over parameters like antenna gain and beam lobe attenuation. All these advances have enabled our customers to better model radiometric effects and sample returns that closely match real-world behavior.

Let's take a look at some recent examples from Falcon's virtual SAR sensor:

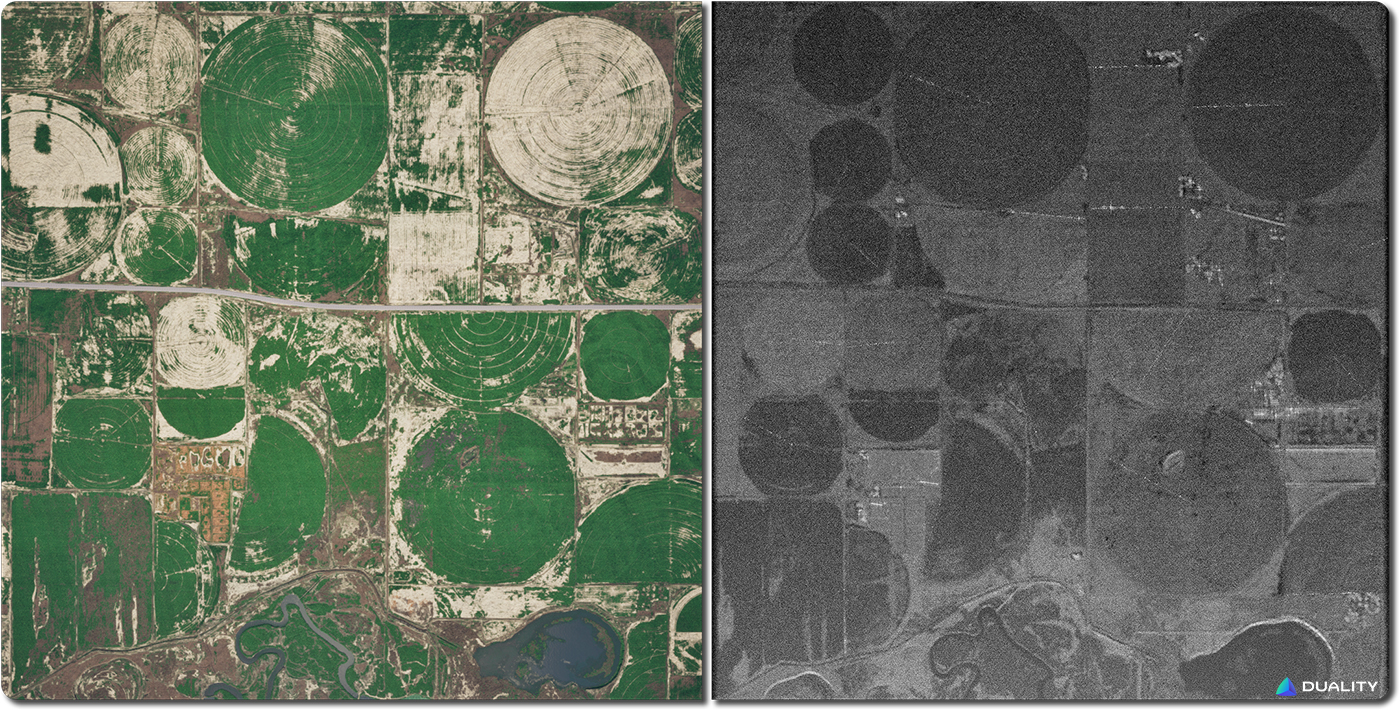

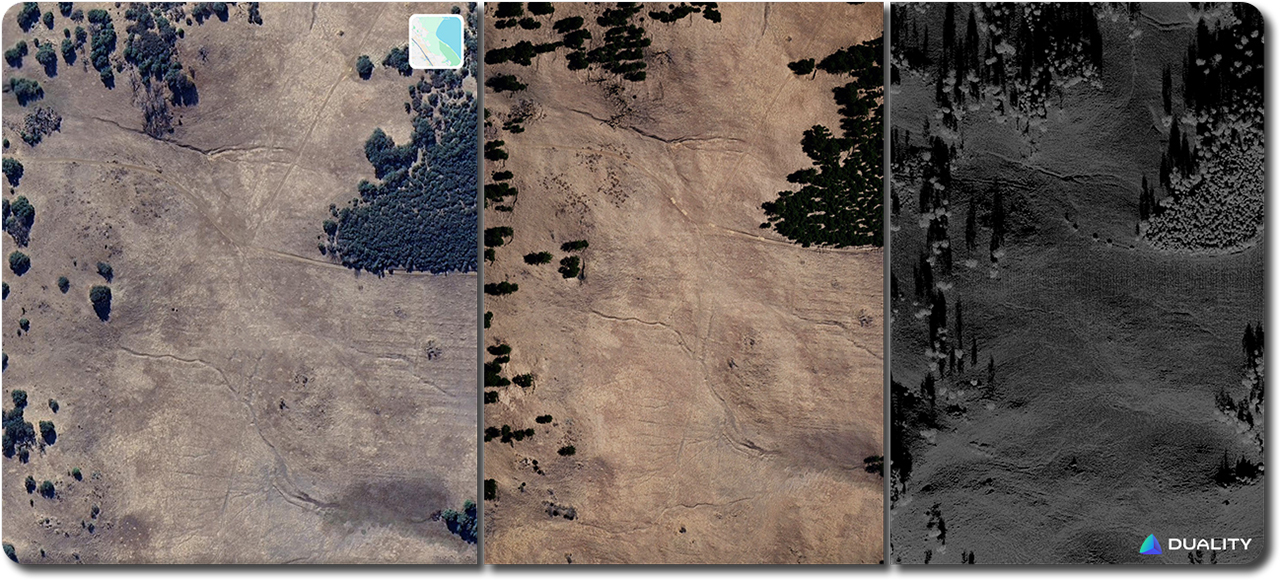

Terrestrial Example

First, let's look at real imagery of an environment and the resulting real-world SAR product, and then we'll compare it to Falcon's digital twin versions.

Real

SAR Parameters

- Resolution — 80 cm

- Mode — Spotlight

- Azimuth — 97.8°

- Grazing angle — 40.3°

- Aperture time — 1s

Digital Twin + Virtual SAR Product

Falcon Virtual SAR Sensor Parameters:

- Resolution — 30 cm

- Mode — Spotlight

- Elevation beam spread — 21°

- Azimuth beam spread — 13°

- Grazing angle — 30°

- Speed — 100 m/s

- Aperture time — 1s

- Generation time — 122.8s

- Raw sample count — 191423460

- Samples per capture — 3189655
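As a quick sanity check on the parameters above, the short sketch below derives two quantities from the listed values: the distance covered during the aperture, and the number of captures implied by the sample counts. It assumes that "Speed" is the platform speed and that "Samples per capture" is a per-capture average; neither is stated explicitly in the list, and the variable names are purely illustrative.

```python
# Values copied from the Falcon virtual SAR parameter list above.
speed_m_per_s = 100.0           # assumed to be the platform speed
aperture_time_s = 1.0
raw_sample_count = 191_423_460
samples_per_capture = 3_189_655  # assumed to be a per-capture average

# Distance the platform travels while the aperture is synthesized.
aperture_length_m = speed_m_per_s * aperture_time_s

# Number of captures implied by the two sample counts.
implied_captures = raw_sample_count / samples_per_capture

print(f"Synthetic aperture length: {aperture_length_m:.0f} m")
print(f"Implied captures per aperture: {implied_captures:.2f}")
```

Under these assumptions the platform covers 100 m during the 1 s aperture, and the sample counts work out to roughly 60 captures.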

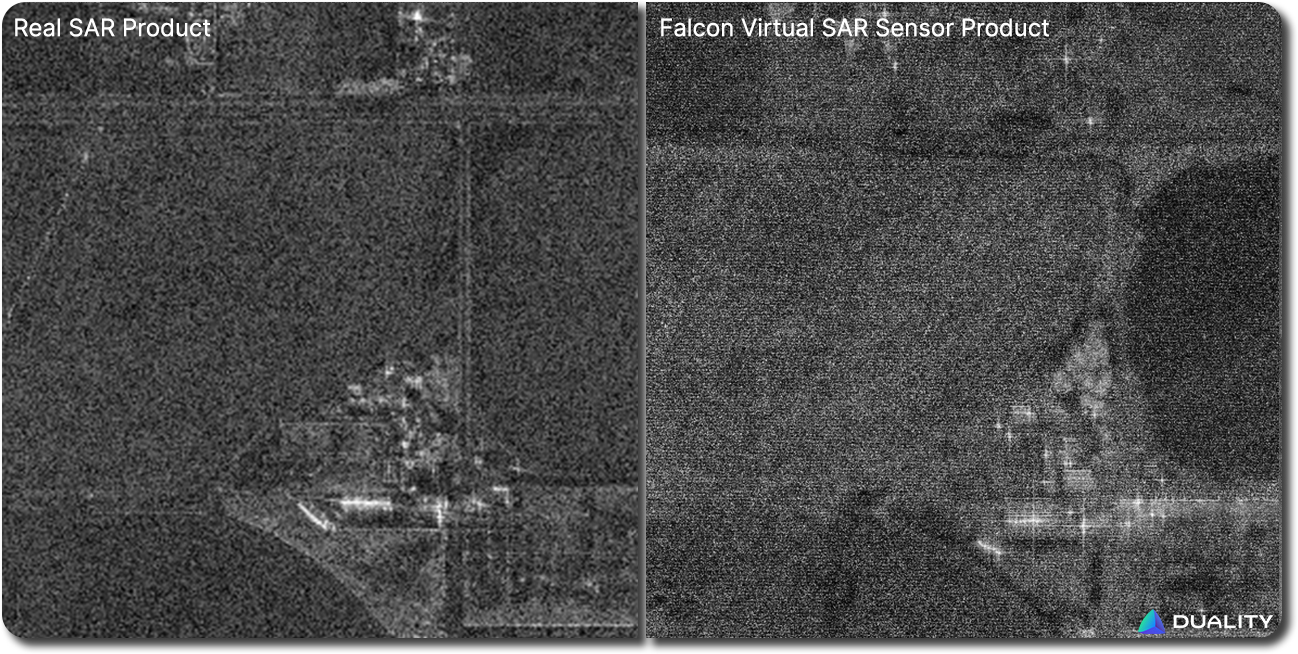

Let's take a closer look at the real and virtual SAR products:

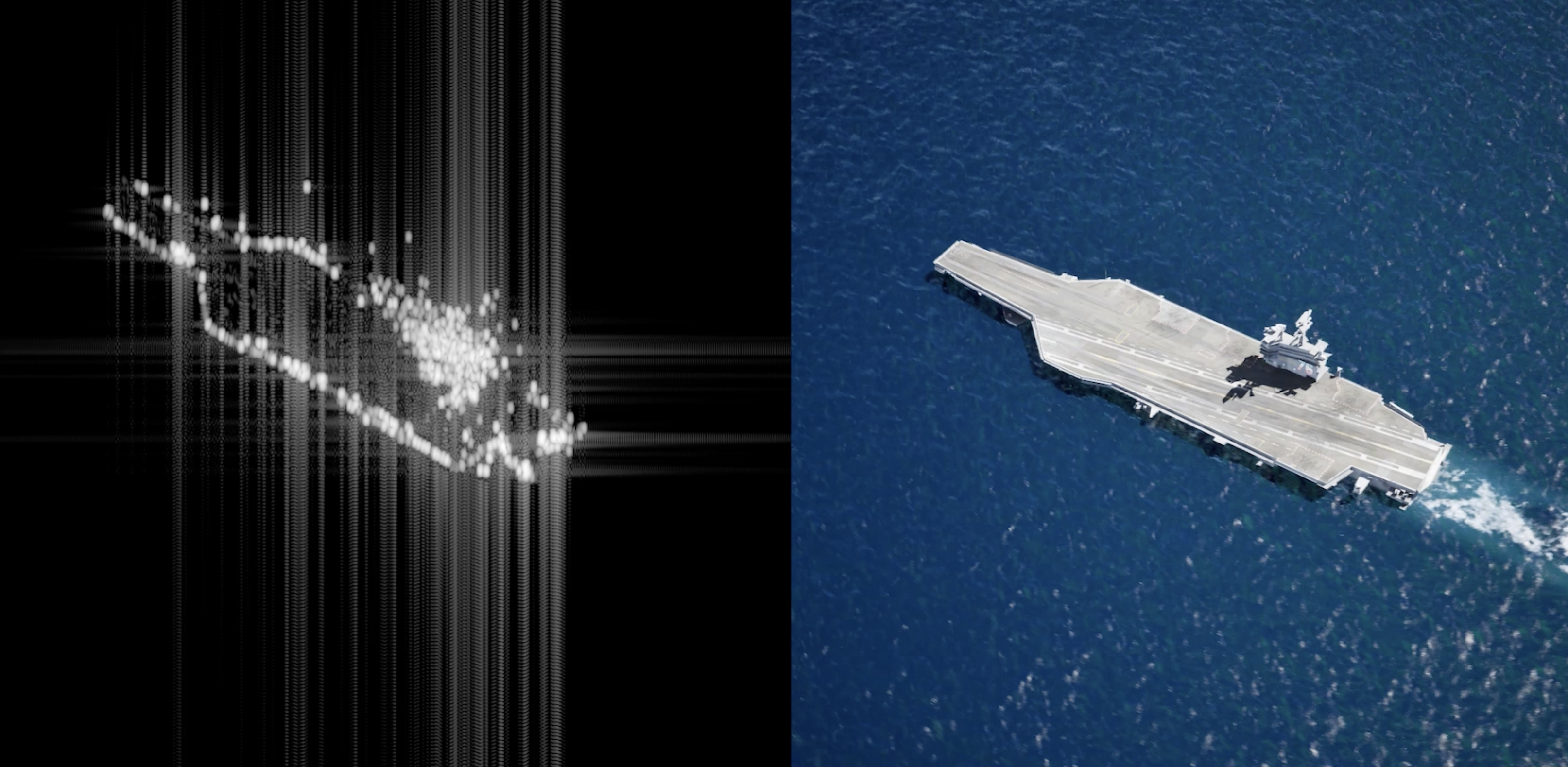

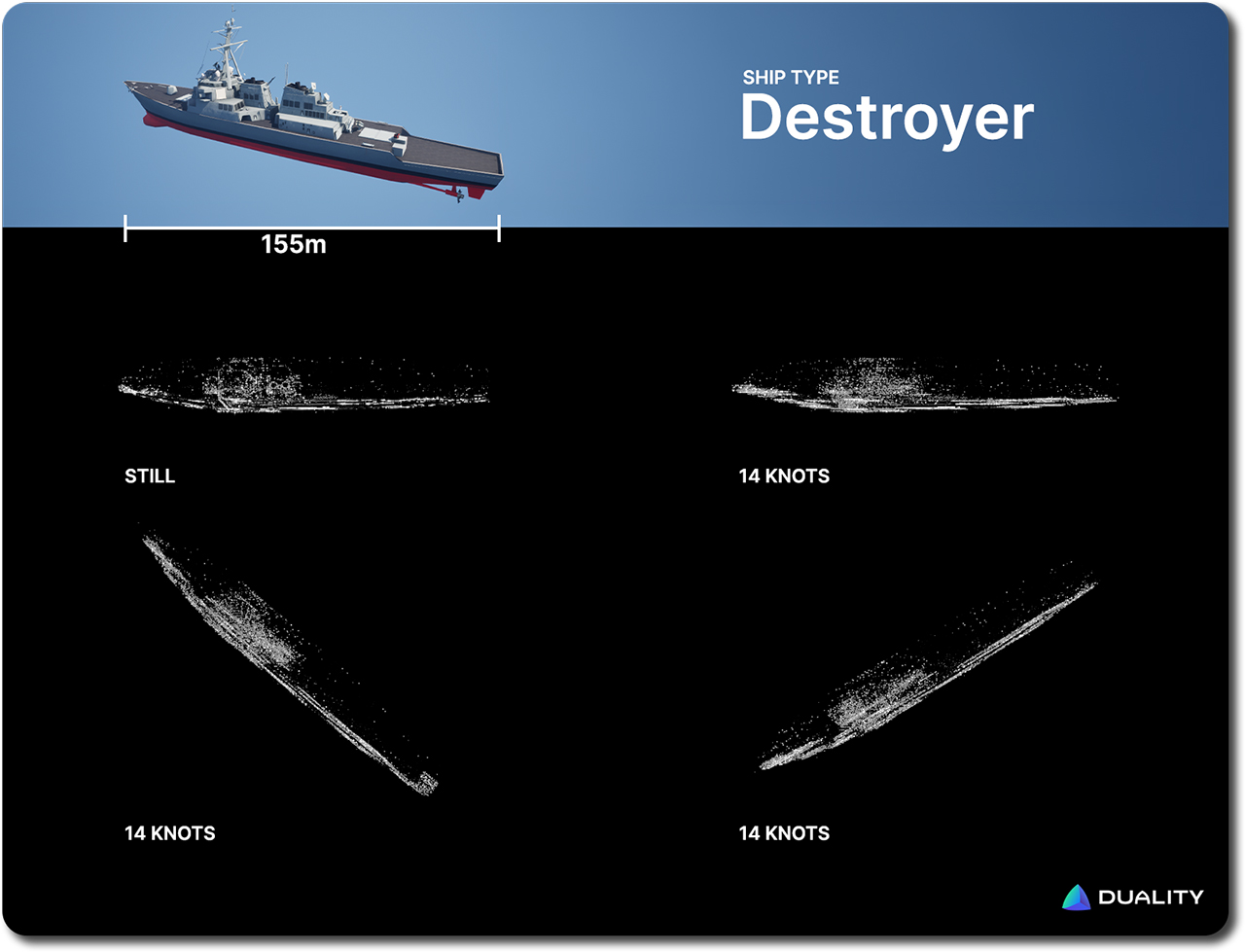

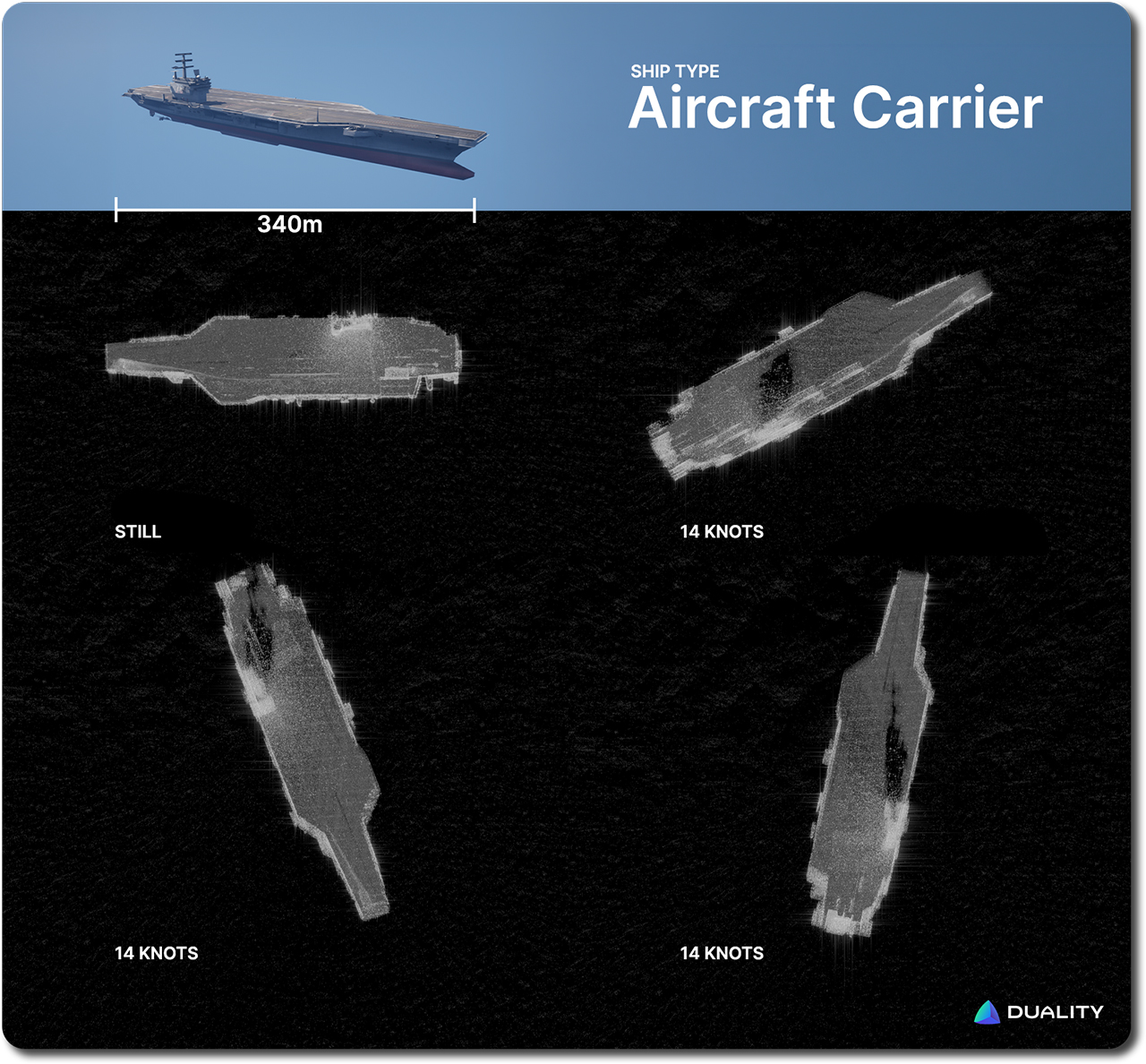

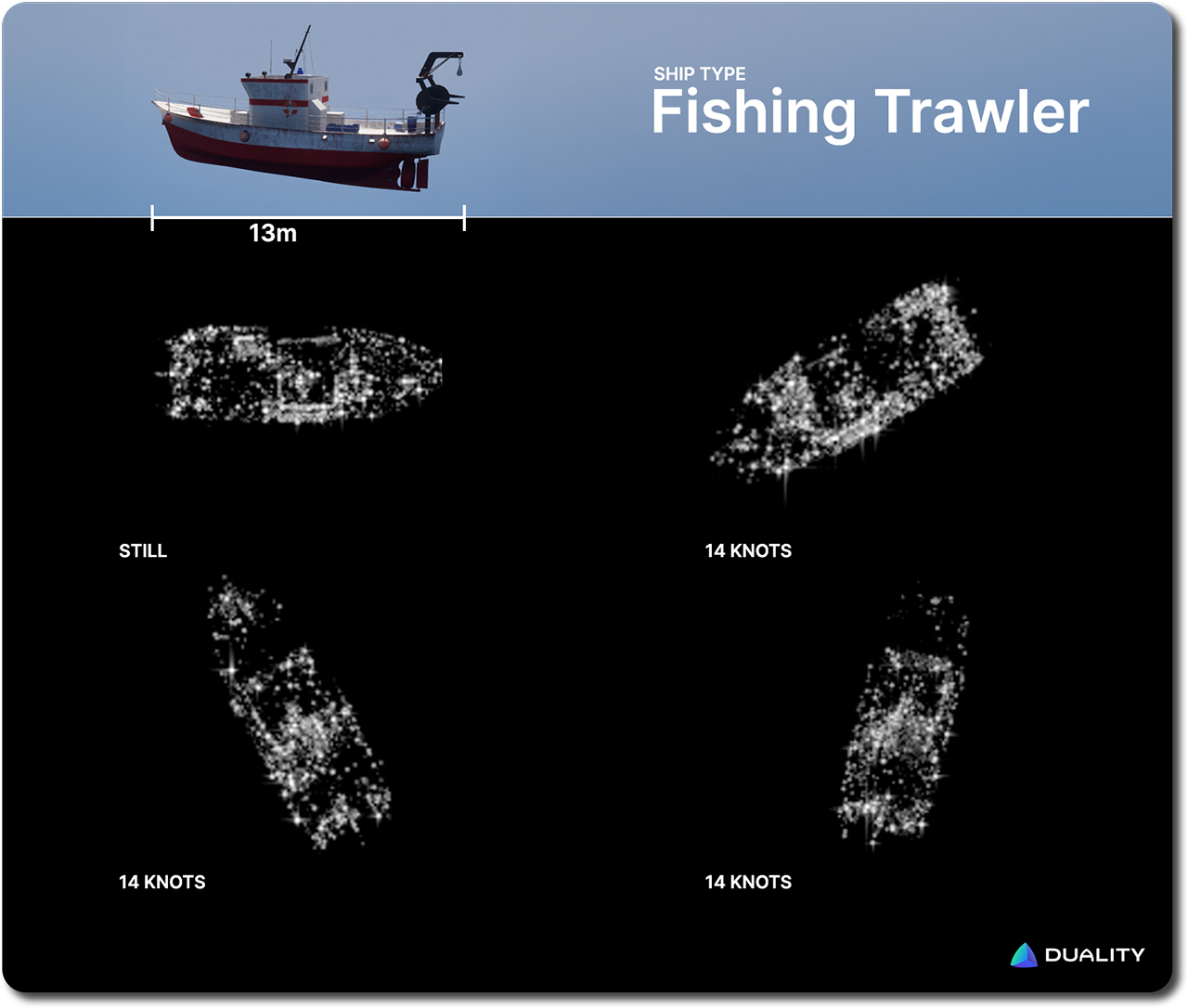

Marine Examples

Next, we present examples of three marine vessels, at a standstill and in motion.

Parameters:

- Resolution — 30 cm

- Mode — Stripmap

- Elevation beam spread — 7°

- Azimuth beam spread — 3.5°

- Grazing angle — 17°

- Speed — 100 m/s

- Aperture time — 1s

- Generation time — 281.22s

- Raw sample count — 716388240

- Samples per capture — 11939840

Parameters:

- Resolution — 30 cm

- Mode — Stripmap

- Elevation beam spread — 7°

- Azimuth beam spread — 3.5°

- Grazing angle — 17°

- Speed — 100 m/s

- Aperture time — 1s

- Generation time — 281.22s

- Raw sample count — 716388240

- Samples per capture — 11939840

Parameters:

- Resolution — 10 cm

- Mode — Stripmap

- Elevation beam spread — 7°

- Azimuth beam spread — 3.5°

- Grazing angle — 45°

- Speed — 100 m/s

- Aperture time — 1s

- Generation time — 16.61s

- Raw sample count — 823740

- Samples per capture — 13667
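To put the generation times listed in this post in context, the sketch below divides each run's raw sample count by its generation time. The run labels and the helper name `samples_per_second` are made up for illustration, and the resulting rate is only a rough comparison, since the post does not say what else is included in the generation time.

```python
# (raw sample count, generation time in seconds), copied from the
# parameter lists in this post. The labels are illustrative only.
runs = {
    "terrestrial spotlight, 30 cm": (191_423_460, 122.8),
    "marine stripmap, 30 cm": (716_388_240, 281.22),
    "marine stripmap, 10 cm": (823_740, 16.61),
}


def samples_per_second(raw_samples: int, generation_time_s: float) -> float:
    """Average number of raw samples produced per second of generation time."""
    return raw_samples / generation_time_s


throughput = {
    name: samples_per_second(raw, seconds)
    for name, (raw, seconds) in runs.items()
}

for name, rate in throughput.items():
    print(f"{name}: {rate / 1e6:.2f} million raw samples per second")
```

The rate varies considerably between runs, so generation time should not be read as scaling linearly with sample count.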

While Falcon’s SAR capabilities will continue to evolve based on input and validation from our customers, in this blog we want to look at the key factor in getting high-quality synthetic data: the digital twin environment the sensor operates in.

A Virtual Sensor Is Only As Good As Its Virtual World

When teams build synthetic data pipelines for physical AI training, the focus tends to fall on sensor fidelity. Does the simulated radar match the real one? Are the antenna patterns right? Is the backscatter modeled correctly? These are critical questions, and, as we demonstrated above, Falcon's SAR implementation handles them rigorously, with physically-based modeling you can validate against real-world returns.

But a SAR beam can sweep across dozens of square kilometers in a single pass. It sees roads, buildings, vegetation, rocky terrain, ships at sea, and a thousand other objects, all at once. Generating valuable synthetic data means getting all of that right, not just the sensor. In other words, it means getting right the electromagnetic reflectivity of the dynamic world that the SAR is imaging.

A virtual sensor’s output is only as good as the virtual world it can gather data from. That key point often gets overlooked, especially when synthetic data is used to train AI models and to validate that SAR post-processing pipelines can handle diverse real-world contexts. The video below explores the site twin that was used to generate the Centerfield, UT, SAR product above.

A Unified World That Works Across The Full Sensor Stack

Falcon's approach starts with our Geospatial Pipeline: elevation data, satellite imagery, and other data streams are combined with AI semantic segmentation to map the spatial distribution of objects across large environments. The result is a rapidly generated, high-fidelity digital twin that, right out of the box, can be used to generate accurate, physically motivated synthetic data for virtual sensors ranging from EO to thermal to LiDAR, and more.

Above, we see another example of how a site twin generated by the Geospatial Pipeline is immediately used to produce a virtual SAR product. A key benefit of this digital twin approach is that we can also easily introduce new variables into the environment, and they are immediately reflected in the resulting SAR product (below).

Even more consequentially, the same site twin that generates the SAR data also generates electro-optical imagery, infrared data, segmentation maps, and more — across a near-infinite variety of viewpoints, conditions, and scenarios. A new environment is never needed for any sensor modality. Just one unified twin, modeled with the physical rigor to support all of them. The video below showcases just a sample of viewpoints and sensors all generating concurrent and time-synced data.

That matters because real-world surveillance isn't single-modal. The goal of any advanced system is to fuse multi-modal information from multiple perspectives into a coherent understanding of what's happening in a complex, dynamic scenario. A SAR pass carries vital information. Thermal imaging from a drone adds further puzzle pieces. Finally, EO feeds from ground-level vehicles round out the datascape.

Understanding how those sensor streams align and complement each other — and training models to work with fused data — requires a platform that can generate all of them from the same ground truth deterministically and with synchronized timestamps.
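To make the idea of synchronized timestamps concrete, here is a minimal sketch of how frames from several sensors might be grouped into fused training samples. It is not Falcon's API: the `Frame` record, the sensor labels, and the file paths are all hypothetical, and it assumes the simulator stamps concurrent captures with the same simulation time.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    """Hypothetical record for one sensor capture (not a Falcon type)."""
    sensor: str         # e.g. "sar", "thermal", "eo"
    timestamp_s: float  # simulation time of the capture, in seconds
    path: str           # where the rendered output was written


def group_by_timestamp(frames):
    """Group frames by timestamp, quantized to whole microseconds."""
    groups = defaultdict(dict)
    for frame in frames:
        key = round(frame.timestamp_s * 1_000_000)
        groups[key][frame.sensor] = frame
    return groups


def fused_samples(frames, required_sensors):
    """Return time-ordered groups that contain every required sensor."""
    groups = group_by_timestamp(frames)
    return [
        groups[key]
        for key in sorted(groups)
        if set(required_sensors).issubset(groups[key])
    ]


# Usage: only t = 0.0 has all three modalities, so one sample is returned.
frames = [
    Frame("sar", 0.0, "sar_000.png"),
    Frame("thermal", 0.0, "thermal_000.png"),
    Frame("eo", 0.0, "eo_000.png"),
    Frame("eo", 1.0, "eo_001.png"),
]
samples = fused_samples(frames, {"sar", "thermal", "eo"})
print(len(samples), sorted(samples[0]))
```

Grouping on a shared timestamp only works when every sensor is rendered from the same scenario clock, which is the property described above. With independently recorded real-world streams, an interpolation or nearest-neighbor matching step would be needed instead.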

That is exactly the workflow Falcon enables: the same environment and the same scenario, time-synchronized and rendered coherently across a full suite of sensors. The investment in rigorous environment modeling isn't overhead. It's what makes synthetic data truly valuable.